Victim in critical condition

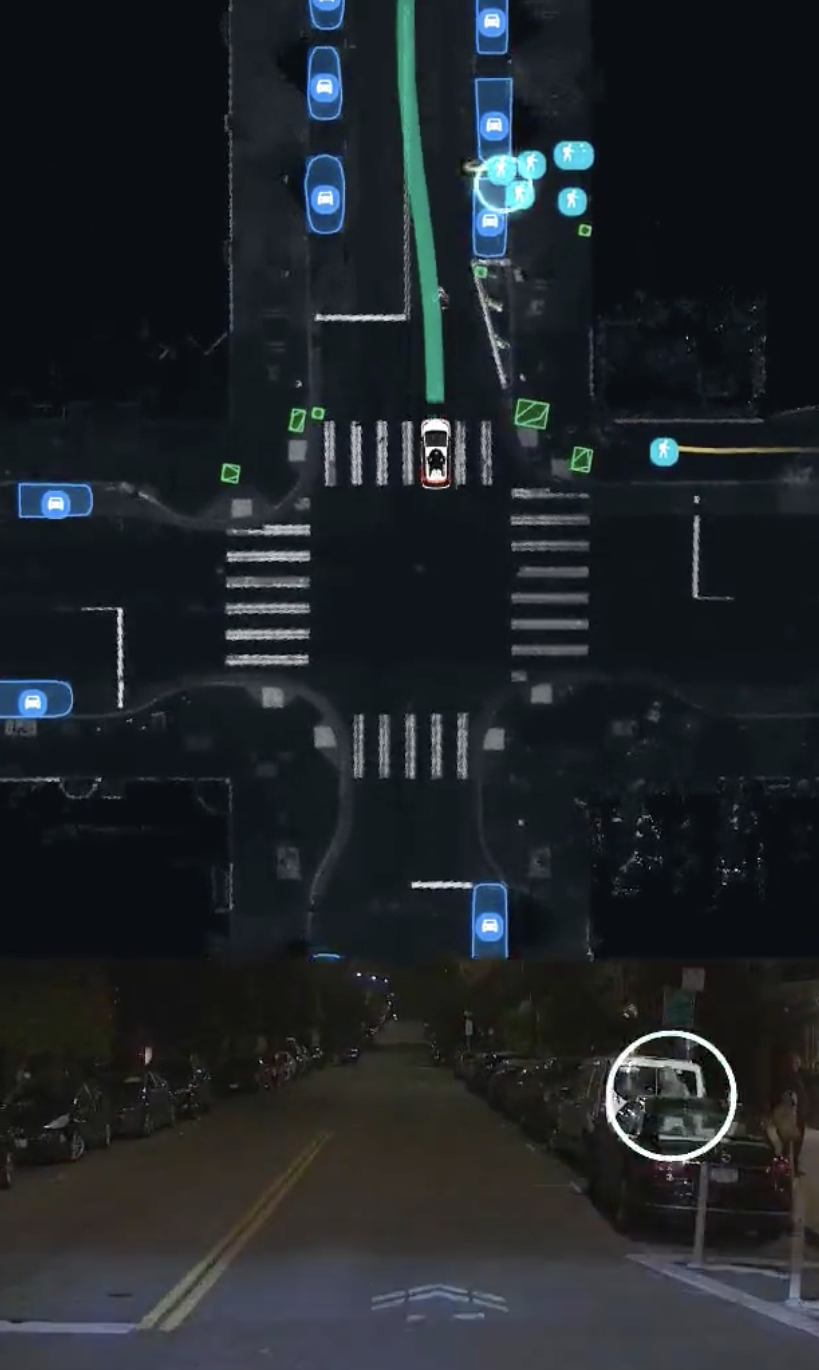

In that screenshot, you can see a pedestrian is moving towards the road (but not yet on the road proper, he is still between two parked cars) and the Cruise has already decided to illegally drive with two wheels on the wrong side of the road, over a double yellow line, to avoid driving close to the pedestrian.

Two seconds later in that video the pedestrian has run into the path of the driverless Cruise car, and the car has stopped. You can view the full video here:

This is a completely different incident.

Why would you just run across the road like that without looking?

Pretty sure they were gunning for insurance fraud.

One of the big problems with how organisations often work, especially private businesses, is the extremely casual attitude to product testing and risk assessment. It’s only after they spend shitloads on lawyers and public relations that they are suddenly able to prioritise creating jobs dedicated explicitly to preventing the damage they cause with that attitude.

But because law-making is slow, weighed down by flawed human power structures combined with legitimately necessary procedures, the only thing businesses need to do is to outpace the speed of law change to avoid being punished. Outpacing the law has been easy enough to do at the best of times, but with half-assed exploitative software development in a rapidly progressing robotics and ‘AI Boom’ environment, it will only get easier and hurt more people.

And then the executives who allowed their shoddy products to hurt people will just change employers, likely for a pay raise, or just sell the business outright. The only consequences for their reckless management personally are a few late nights in a bad mood. All because limited liability meant they might as well have just been an innocent bystander.

Meanwhile the victims, if they survive, are left in lifelong pain and misery, because courts ruled that the law doesn’t cover their novel situation. Not to mention the damage to their families and communities.

Globally, we need to start holding individual organisation decision-makers to personal account for the damage their decisions cause. Both financial and prison-time, for both environmental and human damage. I mean like “Board of Directors and all Chief Officers of Cruise on trial for negligent homicide” levels of responsibility. It’s the only way to prevent this kind of unnecessary suffering.

tl;dr

1. Risk of personal loss is the only way people in power will prioritise building safer products.

2. We need the law to catch up faster to a world where humans can offload more life-changing decisions to computers.

3. Law-makers should start assuming we live on the Star Trek holodeck in a Q episode instead of the Unix epoch, if they are going to catch up on their huge backlog.

4. People need to start assuming their code is imprecise and dangerous, and build in graceful failures. Yes, it will be expensive in a time-sensitive environment; important things often are.

I’ve read about the Cruise team, and “extremely casual attitude to product testing” does not accurately describe what they are doing. Cruise vehicles have a much lower accident rate than human drivers, with less severe accidents, and have logged millions of road miles without seriously injuring anyone (until now).

Unlike a certain narcissistic auto manufacturer’s owner…

Oh, did they actually release data and have an independent research group analyse it? Or is this a statement from their PR department? It’s easy to be better than the average human driver if you only drive in good weather on well-built roads.

Tesla always makes big claims about how safe it is, but to the best of my knowledge has never actually released any usable data about it. It would be awesome if Cruise did that.

‘Less shit than the average human at it’ is a really low bar to set for modern computers, even if Tesla fails at that poor standard and Cruise is currently top of the game. We still need much higher bars when we’re talking about entirely automated systems which are controlling speedy large chunks of metal, or even other smaller-scale-impact-and-damage systems. Systems which can’t just hop out, ask if the victims are OK, render appropriate first aid, accurately inform emergency services, etc.

The more automation, the higher the standards should be, which means we need to set legal requirements that at least try to scale with the development of technology.

I disagree. Human drivers kill over 40,000 Americans a year. If there’s an alternative that kills fewer than 40,000 a year, we should take it. Ideally mass transit, but America seems to like cars.

I wasn’t suggesting stopping the development of automated vehicles because it’s impossible to have 0 damage. I was advocating having high standards for software/hardware development and real consequences for decision-makers trying to find shortcuts.

Progress and standards are not mutually exclusive.

Good thing it has a good amount of context. But I feel like this kind of incident can only be properly analyzed with images and simulations of what happened.

According to reports, the pedestrian was illegally crossing the road at a traffic light with a red light for the pedestrians and a green light for the cars.

The human driven car presumably didn’t see the pedestrian and hit her at high speed, she then bounced into the path of the autonomous car. The autonomous car, which was empty, slammed on the brakes but could not stop in time.

Would a human have stopped in time? That would depend on the human…

I think the problem here is more that it parked on her after hitting her. Presumably a human wouldn’t have done that.

Do you think it would have been better to continue driving over someone? What would you like the car to do after a person is thrown under it? Hover mode?

It would have been better to not PARK ON HER LEG. They had to lift the car off her.

It couldn’t have avoided hitting her, but it could have not stopped ON her.

Don’t let the perfect be the enemy of the good. Going “I just hit someone, I’m going to shut down everything and wait for people to come solve this” is a better general approach than “I just hit someone, better back up to make sure I’m not parked right on top of them.” That second approach could lead to the vehicle dragging the victim, driving over them a second time, or otherwise making things much worse.

Driving over someone’s leg a second time is a great way to make sure it’s broken.

It’s not like the car can’t be controlled. If driving off was deemed the correct action, they could have had someone override it and drive the car off. Driving off is almost never the recommended action in these cases.

Lifting off is by far the safer choice.

Once it’s already parked on the leg, sure. But during the accident, continuing on from the initial impact to go over the leg (hopefully with just the front tire) would’ve been preferable. That said, it’s a very weird edge case that I imagine there were no sensors for, and a human could’ve made the exact same mistake: trying to brake beforehand, not quite making it, and inadvertently parking on the victim instead.

Not from what I’ve seen on some gore videos

Both vehicles started moving after the traffic lights turned green.

How was either vehicle going so fast that “braking aggressively” wasn’t enough to stop it immediately after accelerating from a red light?

This makes no sense without a video

Can we use Elon as the stand in for the victim?

Not to downplay this situation, because it is a bad situation regardless, but looking at the multiple articles released on this and the lack of any video provided, it’s hard to say who’s at fault here. Well, aside from the driver who hit and ran, obviously.

It sounds like both vehicles had a green light at the intersection, and I expect the car in the second lane wouldn’t have been able to see the pedestrian crossing in the first lane to begin with. The article states that when the vehicle saw the pedestrian it “braked aggressively” to try not to hit the person. I don’t think this is as simple as “oh, there was a person waiting at the crosswalk, so it shouldn’t have gone”; a lot of these intersections also have pedestrian lights on both sides of the crosswalk. Every article is blaming the autonomous vehicle, but I really don’t think a human driver would have done things differently given what has been released. In fact, they might have made things worse by immediately driving off the person’s leg/ankle instead of waiting for the lift.

I’ll be interested to see the footage when it’s released, because I’m curious whether this was an actual mistake on the autonomous vehicle’s part, or whether it did what it could and the collision simply couldn’t be avoided.

A car driven by a human is unlikely to need firefighters to lift the vehicle up to get at the woman pinned by its tire. Even if autonomous cars are good at general driving, they have an unfortunate habit of making emergencies worse.

Then again, a car driven by a machine won’t text and do makeup at the same time. I’ve seen so many idiots in traffic it hurts to try and remember them all. That said, humans are pretty good at driving, unless they start acting like idiots and do something else while driving.

Just today the Some More News folks put out a great breakdown of a lot of the current issues and shady shit going on in the AI car industry.

I think most computer vision cameras are fairly low resolution, so I’m not expecting the hit-and-run vehicle will be identified.

I’m under the impression they rely on lidar or radar even more than video/vision. I’m talking out of my ass though; I don’t actually know.

Doesn’t sound as bad as the other headline that was lurking in another magazine. I do think it’s an unexpected situation and the AI handled it as best as it could.

It couldn’t avoid her, no. The bigger problem was that the car parked on her, I think.

“Do nothing” is usually not that bad an approach to dealing with an unknown situation. I could easily see a situation where trying to back away from the person you just hit would increase the damage.

As other comments have suggested, we should wait for the video before judging whether this was really a bad choice by the autonomous car.

“Do nothing” is usually not that bad an approach

That doesn’t get any truer even if you repeat it a few more times.

Truth is that a general approach was not sufficient here. This car’s programming was NOT good enough. It made a bad decision with bad consequences.

And “no it isn’t” isn’t a very convincing argument to the contrary.

Yes, in this particular case, maybe the car should have moved a bit. I’m talking about the general case. What are the odds that a car happens to come to a stop with its wheel exactly on top of someone’s limb, versus having that wheel finish up somewhere near the person where further movement might cause additional harm? And how can the car know which situation it’s currently in?

What are the odds

Wrong question.

If you want autonomous cars outside in the real world (as opposed to artificial lab and test scenarios), then they have to deal with real world situations. This situation has happened in reality. You don’t need to ask about odds anymore.

how can the car know which situation it’s currently in?

That is an engineering question. A good one. And again one of these that should have been solved before they let this car out into the real world.

This situation happened, yes. Do you think this is the only time that an autonomous car will ever find itself straddling a pedestrian and need to decide which way to move its tires to avoid running over their head? You can’t just grab one very specific case and tell the car to treat every situation as if it was identical to that, when most cases are probably going to be quite different.

I find this bizarrely reminiscent of Brandon Lee’s death.