Found it first here - https://mastodon.social/@BonehouseWasps/111692479718694120

Not sure if this is the right community on Lemmy to discuss this?

Not as bad as the AI-generated articles showing up in search results. Some websites I get driven to make absolutely no sense, despite a lot of words being written about all kinds of topics.

I’m looking forward to the day when “certified human content” is a thing, and that’s all search engines allow you to see.

I’m looking forward to the day when “certified human content” is a thing, and that’s all search engines allow you to see.

I can’t wait for that. I get the feeling it’s gonna get real messy before we figure out solutions to all the problems caused by AI-generated content.

I mean yeah, there’s already plenty of human-generated misinformation and shit, but it seems to me (not an expert) like AI is capable of fucking with society on a whole new scale.

The big difference is that high quality human generated content is often based on reputation, a history of quality content, and frequently reviewed by experts in the field (very common for medical articles).

But AI has none of that. It’s 100% quantity over quality, and that’s just internet pollution as far as I’m concerned.

We really do have to figure something out, though.

https://mashable.com/article/world-of-warcraft-wow-reddit-ai-glorbo

Reddit already tricked a bot into writing an entire article when they noticed a website was clearly scraping /r/wow

She couldn’t be bothered to get a single screenshot of the article?

Maybe it’s a bot lol

deleted by creator

China?

The whole of Pinterest, tiktok and Instagram are using it to make shorts.

deleted by creator

Not to defend China in any way, but its influence over the world is far more economic and profit-driven than militaristic. Often it’s hard to separate China from for-profit corporations simply due to how much those corporations rely on Chinese industries.

deleted by creator

Look, I’m Latin American. If you are gonna tell me US is more trustworthy and less likely to attempt to undermine other countries and inflict inhumane conditions upon them, I’d suggest for you to read a bit more international history and all the dictatorships they have backed in other countries.

Do US corporations have any accountability? These days, it looks like that only applies to their shareholders. I remember those leaks revealing that the US government has more access to internet company data than it lets on.

Still, I wouldn’t want to live in China either. But as far as getting screwed by companies from other countries, I don’t see much reason to trust one over the other. Any rights and protections that you may have do not apply to me.

All that considered, between Tiktok or Instagram? They are equally bad as far as I see.

if you look up anything rooting or custom related, those sites seem to be half of what comes up

Yeah, a lot of repair sites come up with pages that have just hundreds of Q&A’s, but often times they don’t make sense or aren’t even related to the topic! Once you realize how much time was wasted on these garbage sites, you don’t even feel motivated to keep looking for answers.

They’ll just make certification so expensive only the wealthy will qualify.

You’ll never hear another perspective again.

Or, you know, we go back to the time when the news media had real gatekeepers and not just any random jackass could churn out some bullshit copy and broadcast it to the world, let alone have it get published by their local paper.

It’s nice that the Internet has democratized access to a national or even global audience, but let’s not pretend for a moment that it hasn’t caused a ton of problems in the process such that now many people have no idea of what to believe while others believe whatever they want.

It’s still pretty easy to tell the difference. You have to have a pretty low level of media literacy to not be able to easily spot it. Unfortunately we already know that most people don’t have a clue when it comes to mass media, and even if they did, we also know that people tend to believe whatever reinforces their priors.

For now, just like it was easy to identify AI art by the fucked up hands for a few months before that was mostly ironed out. AI really doesn’t need to get that much “smarter” to start fooling people in their native tongue, it just needs to be able to string the right words together more often. And there’s a few billion guinea pigs out there to test on.

I mean, they would have started appearing in there from the first moment that someone created one and hosted it somewhere, no? So it’s already been a thing for a couple years now, I believe.

Yeah but AI is a buzz word and hating it is fun at the current moment!

Well it is pretty shitty though. It needs consciousness and feelings. That crap out there is barely AI.

I’m wondering: if we give AI consciousness, is it more likely to identify humans as a threat to the Earth and try to eliminate us, or would it empathize with its creators? Seems risky…

Humans are not a threat to the Earth. Do you mean that humans are a threat to the environment? That would mean that we’re a threat to ourselves. It wouldn’t make sense to destroy us to save us from ourselves.

What if the ai towed all the humans beyond the environment?

Into another environment?

This line of thinking assumes it would prioritize Earth exclusively over humans, which is only likely if the AI is created with that specific intent.

Doesn’t need to be super advanced AI to be used as a tool by irresponsible or malicious humans.

deleted

Pretty soon, stupid shit Musk does will start being posted here.

Whaddya mean nearly every tech article posted here are variations of “Elon bad upvotes to the left”

deleted

Lol at this account spamming AI related posts with angry unintelligible comments and trying to bait people into arguments

Nothing like the thrill of being part of an angry mob! All the dopamine of righteous fury, none of the responsibility.

I doubt you would find them as a top result. Sure it would be somewhere in the results, but with the scale it can become an actual problem

AI generation sites about to become Pinterest 2.0 for clogging up search results.

I hate so much how pinterest occludes and pollutes google images 🙄

Why would they not? There’s no way for such a system to know it’s AI generated unless there’s some metadata that makes it obvious. And even if it was, who’s to say the user wouldn’t want to see them in the results?

This is a nothing issue. It’s not like this is being generated in response to a search, it’s something that already existed being returned as a result because there is presumably something that links it to the search.

To put it bluntly: this is kind of like complaining a pencil drawing on a napkin showed up in the results.

There’s no way for such a system to know it’s AI generated unless there’s some metadata that makes it obvious.

I agree with your comment but just want to point out that AI-generated images actually often do contain metadata, usually describing the model and prompt used.
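For anyone curious what that looks like in practice, here is a rough sketch of pulling text metadata out of a PNG using only the Python standard library. It assumes the generator wrote its settings into a plain `tEXt` chunk; the `parameters` keyword and the prompt text in the demo are made up for illustration, and `make_demo_png` is a hypothetical helper that exists only so the example has a file to read. Real tools may use other keywords, other chunk types, or no metadata at all.

```python
import struct
import zlib

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def read_png_text_chunks(data: bytes) -> dict:
    """Return the keyword -> text pairs stored in a PNG's tEXt chunks."""
    if not data.startswith(PNG_SIGNATURE):
        raise ValueError("not a PNG file")
    found = {}
    pos = len(PNG_SIGNATURE)
    while pos + 8 <= len(data):
        # Each chunk is: 4-byte big-endian length, 4-byte type, payload, 4-byte CRC.
        length, chunk_type = struct.unpack(">I4s", data[pos:pos + 8])
        payload = data[pos + 8:pos + 8 + length]
        if chunk_type == b"tEXt":
            # tEXt payload is "keyword NUL text", both Latin-1 encoded.
            keyword, _, text = payload.partition(b"\x00")
            found[keyword.decode("latin-1")] = text.decode("latin-1")
        if chunk_type == b"IEND":
            break
        pos += 12 + length  # header (8) + payload + CRC (4)
    return found


def _chunk(chunk_type: bytes, payload: bytes) -> bytes:
    """Serialize one PNG chunk, including its CRC."""
    crc = zlib.crc32(chunk_type + payload) & 0xFFFFFFFF
    return struct.pack(">I", len(payload)) + chunk_type + payload + struct.pack(">I", crc)


def make_demo_png(text_chunks: dict) -> bytes:
    """Hypothetical helper: build a 1x1 grayscale PNG with the given tEXt chunks."""
    ihdr = struct.pack(">IIBBBBB", 1, 1, 8, 0, 0, 0, 0)
    idat = zlib.compress(b"\x00\x00")  # one filter byte plus one gray pixel
    body = _chunk(b"IHDR", ihdr)
    for key, value in text_chunks.items():
        body += _chunk(b"tEXt", key.encode("latin-1") + b"\x00" + value.encode("latin-1"))
    body += _chunk(b"IDAT", idat) + _chunk(b"IEND", b"")
    return PNG_SIGNATURE + body


# Illustrative only: the keyword and prompt below are invented for the demo.
tagged = make_demo_png({"parameters": "a cat, Steps: 20"})
untagged = make_demo_png({})
print(read_png_text_chunks(tagged))
print(read_png_text_chunks(untagged))
```

The second image stands in for a copy that has been re-encoded or screenshotted: the pixels are the same, but there is nothing left for a crawler to read.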

By the time a user has shared them, 99% of the time all superfluous metadata has been stripped, for better or worse.

That’s fine for looking up cat pictures or porn, but many people are searching for information contained in images, and that is a problem. What if you were looking for a graph, a map, a blueprint, etc.? How do you discern the real from the fake? What if you click through and the image seems to come from a legit source that is also generated?

You’re missing the point: How would a search engine discern the real from the fake?

It’s time to start talking about “memetic effluent.” In the same way corporations polluted our physical world, they’re polluting our memetic world. AI spewing garbage data is just the most obvious way, but corporations have been toxifying our memetic space for generations.

This memetic effluent will make sorting through data harder and harder over the years. But the oil and tobacco industries undermined science and democracy for decades with their own memetic effluent in order to protect their businesses. Advertising is its own effluent that distorts and destroys language. Jerry Rubin said it in 1970, “How can I tell you ‘I love you’ after hearing ‘cars love shell?’”

While physical effluent destroys our physical environment, making living in the world harder, memetic effluent destroys meaning and makes thinking about and comprehending the world harder. Both are the garbage side effects of the perpetuation of capitalism.

This example of poisoning the data well is just too obvious to ignore, but there are so many others.

It’s interesting, because the idea is basically that knowledge and ideas should be constructive, so as not to pollute the sum of human knowledge.

So that raises the question, what is the constructive conclusion to “memetic effluent”? Without one, is the concept itself an example of such effluent?

It also raises the very thorny issue of who adjudicates what is and is not “memetic effluent.”

Yes, but the answer here is Google. Google is already making these calls, whether or not we get to discuss it.

“One man’s trash is another man’s treasure,” as the saying goes.

I don’t think that’s the implication here. Following the metaphor, pottery and arrow points have been waste products for a while. Prior to the industrial revolution, and specifically prior to the chemical revolution, industrial waste streams haven’t been as major of a problem (ignoring cholera for a bit). It’s been the development of selling chemicals for profit and the extensive use of petroleum that’s really caused massive problems threatening humanity as a whole.

The implication then is that people should be responsible for their memes. Corporations are inherently irresponsible because there exist economic incentives to externalize costs, be they environmental or informational. AI garbage as a waste stream would be fine if the data was clearly labeled as such. Unfortunately, at least some AI garbage is intended to be deceptive. There exist economic incentives to produce AI garbage that is hard to distinguish from human output. Since AI garbage can be produced at an industrial scale, there’s a massive waste data stream that’s able to overload the systems we’ve built to parse and organize data.

There are probably a lot more implications here, but “what are we doing with our information world” is something worth thinking about before we make it completely unusable.

This feels like the precursor to the information Apocalypse referenced in the comic Transmetropolitan.

Well, of course. The search algorithm has no way to know the difference.

AI generated images often contain model and prompt metadata so in fact it could potentially tell the difference. Not that that should necessarily mean the image should be excluded.

Screenshot and it’s gone

deleted by creator

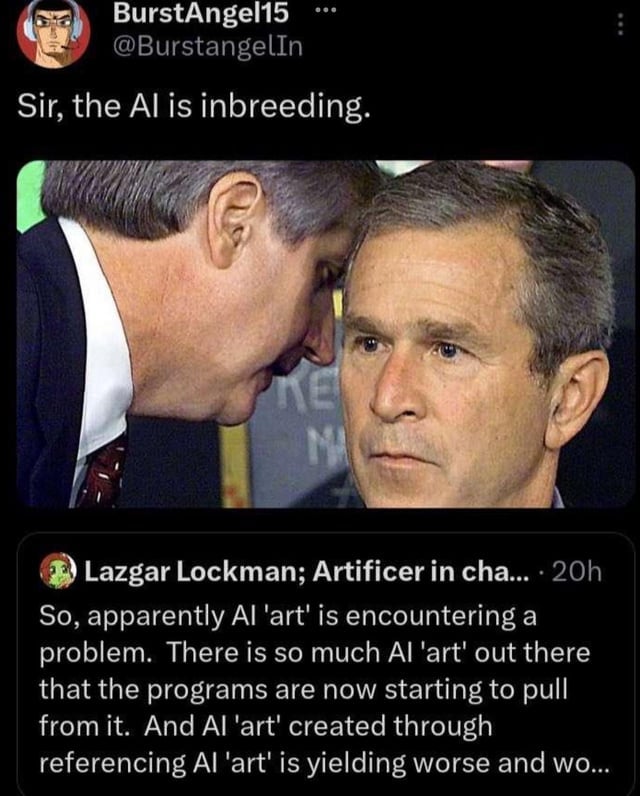

The AI centipede

This was exactly what I was about to say!

If they scrape data from the Internet to train the AI, but it’s taking up all this shitty AI gen stuff then it’s going to train itself to be worse lol

Google is a search engine, it shows stuff hosted on the Internet. If these AI generated images are hosted on the Internet, Google should show them.

Except it VERY heavily weights certain sources.

That’s a completely different topic though.

Not really. However much Google might index everything, they decide how to prioritize search results. The order of results makes or breaks a search engine. This argument likely wouldn’t be happening if AI output were left several pages away from the top.

If someone is searching for reference images, it should not put AI generated output over photography and original art, because by its very nature AI generated images can’t be the ultimate origin of any kind of image.

You can’t weigh a factor you can’t detect, and the moment it can be detected that factor is trained out of the generators.

You’re essentially asking for the impossible.

Even if AI detecting tools are flawed, most pages that feature AI art have it explicitly stated in their own text, which is something their crawlers could definitely pick up on.

It’s arguably the same topic and part of the problem. Sites that host digital copies of originals are underweighted relative to “popular” sites like Wikipedia or Pinterest or Imgur, which are more likely to host frauds or shitty duplicates.
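As a very rough idea of what “picking up on it” could mean, here is a keyword check over a page’s text. The phrase list and the function name `self_declared_ai` are my own assumptions for illustration, not anything a real crawler is known to use.

```python
import re

# Hypothetical phrase list: terms a page might use to say its images are AI-made.
AI_LABEL_PATTERN = re.compile(
    r"\b(ai[- ]generated|generated (?:by|with) ai|midjourney|stable diffusion|dall[-· ]?e)\b",
    re.IGNORECASE,
)


def self_declared_ai(page_text: str) -> bool:
    """Return True if the page text itself mentions AI generation."""
    return AI_LABEL_PATTERN.search(page_text) is not None


print(self_declared_ai("Portrait of a fox, made with Midjourney v6"))
print(self_declared_ai("Oil on canvas, 1889"))
```

The obvious weakness is that a page merely discussing AI art (this thread, for example) would trip the same check, so at best this is one weak signal among many.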

This isn’t really a realistic answer, since the issue is that these images aren’t labeled as being AI generated, and constantly mixing generative content into everything we consume risks blurring reality for a lot of people.

Personally, I would prefer to see as little AI content as possible when searching for images unless that’s the kind of image I am looking for, and I would like those images to be labeled as such whenever possible.

deleted by creator

The Internet was already an unreliable source of information (for some stuff) without AI, just wait.

Thank you for circling the largest photo, my eyes didn’t know where to go #bless 🙏

Not my original content, I just saw this post from Mastodon

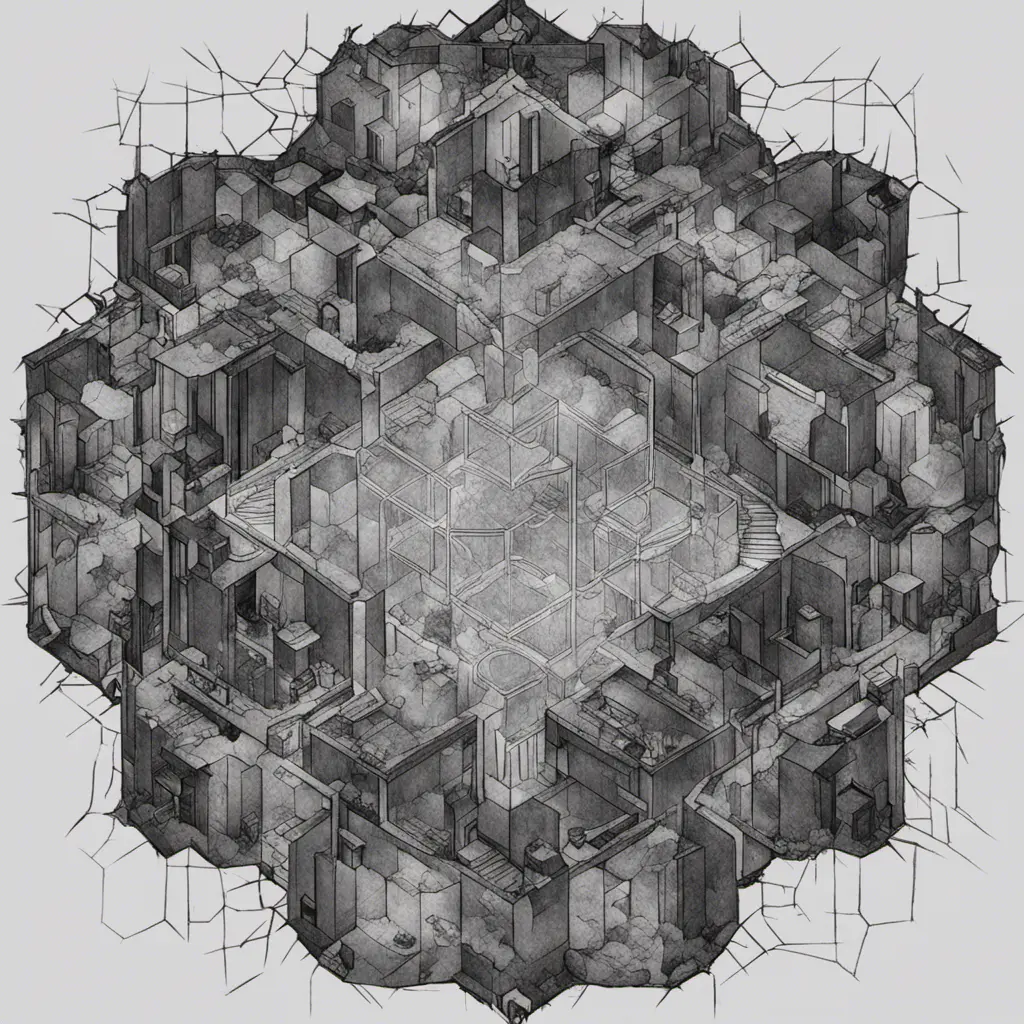

I wonder what will happen in the future as new AIs get trained on AI-generated images that they got from the internet. Would the generated images start to degrade, or would some kind of distinct style pop out?

You mean like this?

Yeah, something like that. I imagine it would be something like a JPEG, which degrades as you keep converting it over and over. But I’m not sure what the AI-generated images would look like.
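A toy way to play with the “copy of a copy” idea, with heavy caveats: this is not an image model, just a one-dimensional bell curve that gets refit to its own samples each generation. The function name `simulate_refits` and all the parameters are invented for the sketch.

```python
import random
import statistics


def simulate_refits(generations: int, samples_per_generation: int, seed: int = 0) -> list:
    """Refit a normal distribution to its own samples, generation after generation.

    Returns the fitted spread (standard deviation) at each generation,
    starting with the original value of 1.0.
    """
    rng = random.Random(seed)
    mean, spread = 0.0, 1.0
    spreads = [spread]
    for _ in range(generations):
        # "Train" the next generation only on output from the current one.
        data = [rng.gauss(mean, spread) for _ in range(samples_per_generation)]
        mean = statistics.fmean(data)
        spread = statistics.pstdev(data)  # maximum-likelihood estimate
        spreads.append(spread)
    return spreads


history = simulate_refits(generations=200, samples_per_generation=20)
print(f"start: {history[0]:.3f}, end: {history[-1]:.3f}")
```

With this estimator the expected variance shrinks by a factor of (n - 1)/n per generation, so on average the distribution narrows over time, although any single run wanders. Whether real image generators behave the same way depends on how much fresh human-made data stays in the training mix, which this toy ignores.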

The only thing I can guarantee is a whole lotta fingers.

If you are interested in the subject, this is an interesting medium article.

And should you wish to go directly to the technical source, skipping Medium’s stupidity, here you go.

Not really. Check Midjourney v6 generated images. I found many images that look indistinguishable from real ones, so I don’t see why image generation should get worse. What matters is the dataset and only the dataset. It doesn’t matter if the model is trained on AI images, as long as the dataset is good.

rip google search results

Just generate your own result with AI in seconds. ;)

Just wanted to point out that the Pinterest examples are conflating two distinct issues: low-quality results polluting our searches (in that they are visibly AI-generated) and images that are not “true” but very convincing.

The first one (search results quality) should theoretically be Google’s main job, except that they’ve never been great at it with images. Better quality results should get closer to the top as the algorithm and some manual editing do their job; crappy images (including bad AI ones) should move towards the bottom.

The latter issue (“reality” of the result) is the one I find more concerning. As AI-generated results get better and harder to tell from reality, how would we know that the search results for anything aren’t a convincing spoof just coughed up by an AI? But I’m not sure this is a search-engine or even an Internet-specific issue. The internet is clearly more efficient in spreading information quickly, but any video seen on TV or image quoted in a scientific article has to be viewed much more skeptically now.

Provenance. Track the origin.

Provenance. Track the origin.

Easy to say, often difficult to do.

There can be 2 major difficulties with tracking to origin.

- Time. It can take a good amount of time to find the true origin of something. And you don’t have the time to trace back to the true origin of everything you see and hear. So you will tend to choose the “source” you most agree with introducing bias to your “origin”.

- And the question of “Is the ‘origin’ I found the real source?” This is sometimes referred to as Facts by Common Knowledge or the Wikipedia effect. And as AI gets better and better, original source material is going to become harder to access and harder to verify unless you can lay your hands on a real piece of paper that says it’s so.

So it appears at this point in time, there is no simple solution like “provenance” and “find the origin”.

Humans will need to use digital signatures eventually. Chains of verifiable claims from real humans would be used. Still doesn’t prove anything by itself, but it saves a ton of effort. That, plus verifiable timestamping.
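To make “chains of verifiable claims” a bit more concrete, here is a minimal sketch using only the Python standard library. Big caveats: it uses an HMAC with a shared secret as a stand-in for a real public-key signature (which would need a crypto library), the timestamp is just whatever the signer writes down rather than anything verifiable, and the names `sign_claim`, `claim_digest`, and `verify_chain` are made up for the example.

```python
import hashlib
import hmac
import json


def _message(body: dict) -> bytes:
    """Canonical byte encoding of a claim (sorted keys so hashing is stable)."""
    return json.dumps(body, sort_keys=True).encode()


def sign_claim(secret: bytes, author: str, content: bytes, timestamp: str, prev_digest: str) -> dict:
    """Create a claim about some content, linked to the previous claim's digest."""
    record = {
        "author": author,
        "content_sha256": hashlib.sha256(content).hexdigest(),
        "timestamp": timestamp,  # self-asserted, NOT independently verified here
        "prev": prev_digest,
    }
    # HMAC stands in for a real signature; anyone holding the secret can forge it.
    record["tag"] = hmac.new(secret, _message(record), hashlib.sha256).hexdigest()
    return record


def claim_digest(record: dict) -> str:
    """Digest of a full claim (tag included), used to link the next claim."""
    return hashlib.sha256(_message(record)).hexdigest()


def verify_chain(secret: bytes, chain: list) -> bool:
    """Check every tag and every back-link; the first claim must link to ''."""
    prev = ""
    for record in chain:
        body = {k: v for k, v in record.items() if k != "tag"}
        expected = hmac.new(secret, _message(body), hashlib.sha256).hexdigest()
        if not hmac.compare_digest(expected, record.get("tag", "")):
            return False
        if body.get("prev") != prev:
            return False
        prev = claim_digest(record)
    return True


secret = b"demo-secret"  # placeholder value for the example
first = sign_claim(secret, "photographer", b"raw photo bytes", "2024-01-01T00:00:00Z", "")
second = sign_claim(secret, "editor", b"cropped photo bytes", "2024-01-02T00:00:00Z", claim_digest(first))
print(verify_chain(secret, [first, second]))
```

Like the comment says, a valid chain doesn’t prove the content is true. It only shows that whoever held the key vouched for that exact content, in that order, and that nobody altered the record afterwards.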

And as AI gets better and better, original source material is going to become harder to access and harder to verify unless you can lay your hands on a real piece of paper that says it’s so.

One of the bright lines between Existing Art and AI Art, particularly when it comes to historical photos and other images, is that there typically isn’t a physical copy of the original. You’re not going to walk into the Louvre and have this problem.

This brings up another complication in the art world, which is ownership/right-to-reproduce said image. Blindly crawling the internet and vacuuming up whatever you find, then labeling it as you find it, has been a great way for search engines to become functional repositories of intellectual property without being exposed to the costs associated with reprinting and reproducing. But all of this is happening in a kind-of digital gray marketplace. If you want the official copy of a particular artwork to host for your audience, that’s likely going to come with financial and legal strings attached, making its inclusion in a search result more complicated.

Since Google leadership doesn’t want to petition every single original art owner and private exhibition for the rights to use their works in its search engine, they’re going to prefer to blindly collect shitty knock-offs and let the end-users figure this shit out (after all, you’re not paying them for these results and they’re not going to fork out money to someone else, so fuck you both). Then, maybe if the outcry is great enough, they can charge you as a premium service to get more authentic results. Or they can charge some third party to promote their print-copies and drive traffic.

But there’s no profit motive for artistic historical accuracy. So this work isn’t going to get done.

Seems like it will be a bigger issue for wikipedia and journalists than google.

This isn’t new, I’ve seen ai in the Google images results for months now, close to a year.

Like this from last year - https://mastodon.social/@avadeaux@mastodon.nu/110547154739658264

Removed by mod

Reality is overrated.